This is interesting stuff - but like many other similar theories, it strikes me as highly anthropocentric, and in a universe as large and unknown to us as ours, it's hubris to think we are the most important entities in the universe (or multiverse).

David Deutsch And The Beginning of Infinity

Thursday, August 18, 2011 at 11:00 AM EDT

We’re talking about the scientific revolution and humanity’s place in the universe with David Deutsch, Oxford don who’s been called the founding father of quantum computing.

Quantum computing genius and Oxford don David Deutsch is a thinker of such scale and audaciousness he can take your breath away. His bottom line is simple and breathtaking all at once.

This composite image provided by NASA shows a galaxy where a recent supernova probably resulted in a black hole in the bright white dot near the bottom middle of the picture. (AP)

It’s this: human beings are the most important entities in the universe. Or as Deutsch might have it, in the “multiverse.” For eons, little changed on this planet, he says. Progress was a joke. But once we got the Enlightenment and the scientific revolution, our powers of inquiry and discovery became infinite. Without limit.

This hour On Point: David Deutsch and the beginning of infinity.

-Tom Ashbrook

Guests

David Deutsch, quantum physicist and philosopher and author of The Beginning of Infinity.

From Tom’s Reading List

The New Scientist “One of the most remarkable features of science is the contrast between the enormous power of its explanations and the parochial means by which we create them. No human has ever visited a star, yet we look at dots in the sky and know they are distant white-hot nuclear furnaces. Physically, that experience consists of nothing more than brains responding to electrical impulses from our eyes – which can detect light only when it is inside them. That it was emitted far away and long ago are not things we experience. We know them only from theory.”

The New York Times “David Deutsch’s “Beginning of Infinity” is a brilliant and exhilarating and profoundly eccentric book. It’s about everything: art, science, philosophy, history, politics, evil, death, the future, infinity, bugs, thumbs, what have you. And the business of giving it anything like the attention it deserves, in the small space allotted here, is out of the question. But I will do what I can.”

TED Talk “People have always been “yearning to know” – what the stars are; cavemen probably wanted to know how to draw better. But for the better part of human experience, we were in a “protracted stagnation” – we wished for, and failed, in progress.”

Excerpt From The Beginning of Infinity

Introduction

Progress that is both rapid enough to be noticed and stable enough to continue over many generations has been achieved only once in the history of our species. It began at approximately the time of the scientific revolution, and is still under way. It has included improvements not only in scientific understanding, but also in technology, political institutions, moral values, art, and every aspect of human welfare.

Whenever there has been progress, there have been influential thinkers who denied that it was genuine, that it was desirable, or even that the concept was meaningful. They should have known better. There is indeed an objective difference between a false explanation and a true one, between chronic failure to solve a problem and solving it, and also between wrong and right, ugly and beautiful, suffering and its alleviation – and thus between stagnation and progress in the fullest sense.

In this book I argue that all progress, both theoretical and practical, has resulted from a single human activity: the quest for what I call good explanations. Though this quest is uniquely human, its effectiveness is also a fundamental fact about reality at the most impersonal, cosmic level – namely that it conforms to universal laws of nature that are indeed good explanations. This simple relationship between the cosmic and the human is a hint of a central role of people in the cosmic scheme of things.

Must progress come to an end – either in catastrophe or in some sort of completion – or is it unbounded? The answer is the latter. That unboundedness is the ‘infinity’ referred to in the title of this book. Explaining it, and the conditions under which progress can and cannot happen, entails a journey through virtually every fundamental field of science and philosophy. From each such field we learn that, although progress has no necessary end, it does have a necessary beginning: a cause, or an event with which it starts, or a necessary condition for it to take off and to thrive. Each of these beginnings is ‘the beginning of infinity’ as viewed from the perspective of that field. Many seem, superficially, to be unconnected. But they are all facets of a single attribute of reality, which I call the beginning of infinity.

The Reach of Explanations

Behind it all is surely an idea so simple, so beautiful, that when we grasp it – in a decade, a century, or a millennium – we will all say to each other, how could it have been otherwise?

~John Archibald Wheeler, Annals of the New York Academy of Sciences, 480 (1986)

To unaided human eyes, the universe beyond our solar system looks like a few thousand glowing dots in the night sky, plus the faint, hazy streaks of the Milky Way. But if you ask an astronomer what is out there in reality, you will be told not about dots or streaks, but about stars: spheres of incandescent gas millions of kilometres in diameter and light years away from us. You will be told that the sun is a typical star, and looks different from the others only because we are much closer to it – though still some 150 million kilometres away. Yet, even at those unimaginable distances, we are confident that we know what makes stars shine: you will be told that they are powered by the nuclear energy released by transmutation – the conversion of one chemical element into another (mainly hydrogen into helium).

Some types of transmutation happen spontaneously on Earth, in the decay of radioactive elements. This was first demonstrated in 1901, by the physicists Frederick Soddy and Ernest Rutherford, but the concept of transmutation was ancient. Alchemists had dreamed for centuries of transmuting ‘base metals’, such as iron or lead, into gold. They never came close to understanding what it would take to achieve that, so they never did so. But scientists in the twentieth century did. And so do stars, when they explode as supernovae. Base metals can be transmuted into gold by stars, and by intelligent beings who understand the processes that power stars, but by nothing else in the universe.

As for the Milky Way, you will be told that, despite its insubstantial appearance, it is the most massive object that we can see with the naked eye: a galaxy that includes stars by the hundreds of billions, bound by their mutual gravitation across tens of thousands of light years. We are seeing it from the inside, because we are part of it. You will be told that, although our night sky appears serene and largely changeless, the universe is seething with violent activity. Even a typical star converts millions of tonnes of mass into energy every second, with each gram releasing as much energy as an atom bomb. You will be told that within the range of our best telescopes, which can see more galaxies than there are stars in our galaxy, there are several supernova explosions per second, each briefly brighter than all the other stars in its galaxy put together. We do not know where life and intelligence exist, if at all, outside our solar system, so we do not know how many of those explosions are horrendous tragedies. But we do know that a supernova devastates all the planets that may be orbiting it, wiping out all life that may exist there — including any intelligent beings, unless they have technology far superior to ours. Its neutrino radiation alone would kill a human at a range of billions of kilometres, even if that entire distance were filled with lead shielding. Yet we owe our existence to supernovae: they are the source, through transmutation, of most of the elements of which our bodies, and our planet, are composed.
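The claim that a star converts millions of tonnes of mass into energy every second, with each gram worth about an atom bomb, can be checked with E = mc². The sketch below is only a rough illustration: it assumes the Sun stands in for "a typical star", uses the IAU nominal solar luminosity, and uses the conventional energy of a kiloton of TNT. None of these figures or names come from the excerpt.

```python
# Rough check of the mass-to-energy figures using E = m * c**2.
# All constants below are assumptions brought in for illustration.

C = 299_792_458.0        # speed of light in vacuum, m/s (exact SI value)
L_SUN = 3.828e26         # nominal solar luminosity, W (Sun used as the "typical star")
KILOTON_TNT = 4.184e12   # conventional energy of one kiloton of TNT, J


def energy_from_mass(mass_kg: float) -> float:
    """Energy in joules released if mass_kg is converted entirely to energy."""
    return mass_kg * C**2


def mass_loss_rate(luminosity_watts: float) -> float:
    """Mass in kg converted to energy each second at the given power output."""
    return luminosity_watts / C**2


# One gram expressed in kilotons of TNT.
gram_energy_kt = energy_from_mass(1e-3) / KILOTON_TNT

# Mass the Sun converts per second, in tonnes (1 tonne = 1000 kg).
sun_tonnes_per_second = mass_loss_rate(L_SUN) / 1000.0

print(f"1 gram is roughly {gram_energy_kt:.1f} kilotons of TNT")
print(f"The Sun converts roughly {sun_tonnes_per_second:.2e} tonnes per second")
```

Under these assumptions one gram comes to roughly twenty kilotons of TNT, the same order as the first fission bombs, and the Sun's rate comes to a few million tonnes per second.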

There are phenomena that outshine supernovae. In March 2008 an X-ray telescope in Earth orbit detected an explosion of a type known as a ‘gamma-ray burst’, 7.5 billion light years away. That is halfway across the known universe. It was probably a single star collapsing to form a black hole — an object whose gravity is so intense that not even light can escape from its interior. The explosion was intrinsically brighter than a million supernovae, and would have been visible with the naked eye from Earth — though only faintly and for only a few seconds, so it is unlikely that anyone here saw it. Supernovae last longer, typically fading on a timescale of months, which allowed astronomers to see a few in our galaxy even before the invention of telescopes.

Another class of cosmic monsters, the intensely luminous objects known as quasars, are in a different league. Too distant to be seen with the naked eye, they can outshine a supernova for millions of years at a time. They are powered by massive black holes at the centres of galaxies, into which entire stars are falling — up to several per day for a large quasar — shredded by tidal effects as they spiral in. Intense magnetic fields channel some of the gravitational energy back out in the form of jets of high-energy particles, which illuminate the surrounding gas with the power of a trillion suns.

Conditions are still more extreme in the black hole’s interior (within the surface of no return known as the ‘event horizon’), where the very fabric of space and time may be being ripped apart. All this is happening in a relentlessly expanding universe that began about fourteen billion years ago with an all-encompassing explosion, the Big Bang, that makes all the other phenomena I have described seem mild and inconsequential by comparison. And that whole universe is just a sliver of an enormously larger entity, the multiverse, which includes vast numbers of such universes.

The physical world is not only much bigger and more violent than it once seemed, it is also immensely richer in detail, diversity and incident. Yet it all proceeds according to elegant laws of physics that we understand in some depth. I do not know which is more awesome: the phenomena themselves or the fact that we know so much about them.

How do we know? One of the most remarkable things about science is the contrast between the enormous reach and power of our best theories and the precarious, local means by which we create them. No human has ever been at the surface of a star, let alone visited the core where the transmutation happens and the energy is produced. Yet we see those cold dots in our sky and know that we are looking at the white-hot surfaces of distant nuclear furnaces. Physically, that experience consists of nothing other than our brains responding to electrical impulses from our eyes. And eyes can detect only light that is inside them at the time. The fact that the light was emitted very far away and long ago, and that much more was happening there than just the emission of light — those are not things that we see. We know them only from theory.

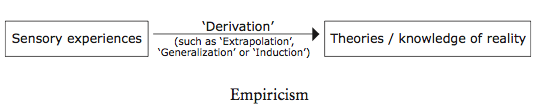

Scientific theories are explanations: assertions about what is out there and how it behaves. Where do these theories come from? For most of the history of science, it was mistakenly believed that we ‘derive’ them from the evidence of our senses — a philosophical doctrine known as empiricism:

For example, the philosopher John Locke wrote in 1689 that the mind is like ‘white paper’ on to which sensory experience writes, and that that is where all our knowledge of the physical world comes from. Another empiricist metaphor was that one could read knowledge from the ‘Book of Nature’ by making observations. Either way, the discoverer of knowledge is its passive recipient, not its creator.

But, in reality, scientific theories are not ‘derived’ from anything. We do not read them in nature, nor does nature write them into us. They are guesses — bold conjectures. Human minds create them by rearranging, combining, altering and adding to existing ideas with the intention of improving upon them. We do not begin with ‘white paper’ at birth, but with inborn expectations and intentions and an innate ability to improve upon them using thought and experience. Experience is indeed essential to science, but its role is different from that supposed by empiricism. It is not the source from which theories are derived. Its main use is to choose between theories that have already been guessed. That is what ‘learning from experience’ is.

However, that was not properly understood until the mid twentieth century with the work of the philosopher Karl Popper. So historically it was empiricism that first provided a plausible defence for experimental science as we now know it. Empiricist philosophers criticized and rejected traditional approaches to knowledge such as deference to the authority of holy books and other ancient writings, as well as human authorities such as priests and academics, and belief in traditional lore, rules of thumb and hearsay. Empiricism also contradicted the opposing and surprisingly persistent idea that the senses are little more than sources of error to be ignored. And it was optimistic, being all about obtaining new knowledge, in contrast with the medieval fatalism that had expected everything important to be known already. Thus, despite being quite wrong about where scientific knowledge comes from, empiricism was a great step forward in both the philosophy and the history of science. Nevertheless, the question that sceptics (friendly and unfriendly) raised from the outset always remained: how can knowledge of what has not been experienced possibly be ‘derived’ from what has? What sort of thinking could possibly constitute a valid derivation of the one from the other? No one would expect to deduce the geography of Mars from a map of Earth, so why should we expect to be able to learn about physics on Mars from experiments done on Earth? Evidently, logical deduction alone would not do, because there is a logical gap: no amount of deduction applied to statements describing a set of experiences can reach a conclusion about anything other than those experiences.

The conventional wisdom was that the key is repetition: if one repeatedly has similar experiences under similar circumstances, then one is supposed to ‘extrapolate’ or ‘generalize’ that pattern and predict that it will continue. For instance, why do we expect the sun to rise tomorrow morning? Because in the past (so the argument goes) we have seen it do so whenever we have looked at the morning sky. From this we supposedly ‘derive’ the theory that under similar circumstances we shall always have that experience, or that we probably shall. On each occasion when that prediction comes true, and provided that it never fails, the probability that it will always come true is supposed to increase. Thus one supposedly obtains ever more reliable knowledge of the future from the past, and of the general from the particular. That alleged process was called ‘inductive inference’ or ‘induction’, and the doctrine that scientific theories are obtained in that way is called inductivism. To bridge the logical gap, some inductivists imagine that there is a principle of nature — the ‘principle of induction’ — that makes inductive inferences likely to be true. ‘The future will resemble the past’ is one popular version of this, and one could add ‘the distant resembles the near,’ ‘the unseen resembles the seen’ and so on.
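The supposed growth in probability with each confirmation can be made concrete with one classic formalization of the sunrise example, Laplace's rule of succession. The excerpt does not name this rule, so treat it as an illustrative assumption. It also estimates only the chance that the next observation succeeds, not that the prediction will always hold, and it already presupposes a probabilistic model rather than deriving one from experience.

```python
from fractions import Fraction


def rule_of_succession(successes: int, trials: int) -> Fraction:
    """Laplace's estimate of the chance that the next trial succeeds.

    Assumes independent trials with an unknown success rate and a uniform
    prior over that rate. These assumptions are part of the rule, not
    something read off from the observations.
    """
    if not 0 <= successes <= trials:
        raise ValueError("need 0 <= successes <= trials")
    return Fraction(successes + 1, trials + 2)


# The estimate after an unbroken run of observed sunrises.
for days in (1, 10, 1000):
    estimate = rule_of_succession(days, days)
    print(f"{days} sunrises observed: next-day estimate = {estimate}")
```

The estimate creeps toward 1 as the run lengthens but never reaches it, which is the pattern the inductivist argument relies on.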

But no one has ever managed to formulate a ‘principle of induction’ that is usable in practice for obtaining scientific theories from experiences. Historically, criticism of inductivism has focused on that failure, and on the logical gap that cannot be bridged. But that lets inductivism off far too lightly. For it concedes inductivism’s two most serious misconceptions.

First, inductivism purports to explain how science obtains predictions about experiences. But most of our theoretical knowledge simply does not take that form. Scientific explanations are about reality, most of which does not consist of anyone’s experiences. Astrophysics is not primarily about us (what we shall see if we look at the sky), but about what stars are: their composition and what makes them shine, and how they formed, and the universal laws of physics under which that happened. Most of that has never been observed: no one has experienced a billion years, or a light year; no one could have been present at the Big Bang; no one will ever touch a law of physics — except in their minds, through theory. All our predictions of how things will look are deduced from such explanations of how things are. So inductivism fails even to address how we can know about stars and the universe, as distinct from just dots in the sky.

The second fundamental misconception in inductivism is that scientific theories predict that ‘the future will resemble the past’, and that ‘the unseen resembles the seen’ and so on. (Or that it ‘probably’ will.) But in reality the future is unlike the past, the unseen very different from the seen. Science often predicts — and brings about — phenomena spectacularly different from anything that has been experienced before. For millennia people dreamed about flying, but they experienced only falling. Then they discovered good explanatory theories about flying, and then they flew — in that order. Before 1945, no human being had ever observed a nuclear-fission (atomic-bomb) explosion; there may never have been one in the history of the universe. Yet the first such explosion, and the conditions under which it would occur, had been accurately predicted — but not from the assumption that the future would be like the past. Even sunrise — that favourite example of inductivists — is not always observed every twenty-four hours: when viewed from orbit it may happen every ninety minutes, or not at all. And that was known from theory long before anyone had ever orbited the Earth.
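The ninety-minute figure is itself an example of a prediction deduced from theory. As a rough sketch, Kepler's third law gives the period of a circular orbit from its radius alone. The 400 km altitude, the mean Earth radius and the function name below are illustrative assumptions, not values from the excerpt.

```python
import math

# Assumed constants for illustration.
MU_EARTH = 3.986004418e14   # Earth's gravitational parameter GM, m^3/s^2
EARTH_RADIUS_M = 6.371e6    # mean Earth radius, m
ALTITUDE_M = 400e3          # hypothetical low-Earth-orbit altitude, m


def circular_orbit_period(altitude_m: float) -> float:
    """Period in seconds of a circular orbit at the given altitude.

    Uses Kepler's third law: T = 2 * pi * sqrt(a**3 / GM),
    where a is the orbit radius measured from Earth's centre.
    """
    orbit_radius = EARTH_RADIUS_M + altitude_m
    return 2.0 * math.pi * math.sqrt(orbit_radius**3 / MU_EARTH)


period_minutes = circular_orbit_period(ALTITUDE_M) / 60.0
print(f"Orbital period at {ALTITUDE_M / 1000:.0f} km: about {period_minutes:.0f} minutes")
```

With these inputs the period comes out a little over ninety minutes, so an observer on such an orbit would typically meet a sunrise about that often.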
